
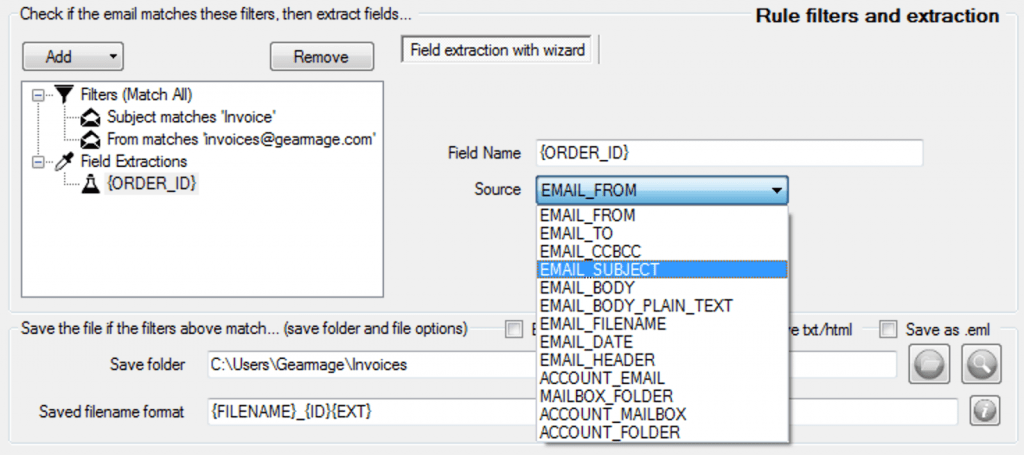

A link extractor tool scans the HTML of a web page and extracts the links it contains. One of its most important uses is counting the external and internal links on your webpage. It is a 100% free SEO tool with multiple uses in SEO work. Choosing the domain option is beneficial if you want to extract all links from a website and identify any existing link issues.

Extracting information about web pages is an essential function in most web-related flows. The Get details of web page action allows you to retrieve various details from web pages and handle them in your desktop flows.

If you're running Windows, consider taking advantage of Cygwin. Don't forget to select the packages Wget, grep, and sed. You can specify not only a preceding string for the URL to export, but also a regular expression pattern if you use egrep or grep -E in the command. Remember to replace the page URL in the command with your actual page URL, and the preceding string with the one you want to specify. To extract links from multiple similar pages, for example all questions on the first 10 pages of this site, use a for loop. There are often multiple tags on one line, so you have to split them first: the first sed adds a newline before every href keyword to make sure no line contains more than one.

Data Miner is an easy-to-use tool for automating data extraction. It is a Google Chrome and Microsoft Edge browser extension that helps you crawl and scrape data from web pages into a CSV file or Excel spreadsheet, and its intuitive user interface and workflow help you carry out advanced data extraction and web crawling.

I'm trying to extract all the URLs that have the format below.
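As a rough Python counterpart to the grep -E filter and the for loop described above, here is a minimal sketch. The names `LinkCollector`, `extract_links`, and `page_urls`, the sample markup, and the `example.com` URL template are all made up for illustration; they are not part of any tool mentioned here.

```python
import re
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag fed to the parser."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(html, pattern=None):
    """Return the links found in html, optionally keeping only regex matches."""
    collector = LinkCollector()
    collector.feed(html)
    if pattern is None:
        return collector.links
    regex = re.compile(pattern)
    return [link for link in collector.links if regex.search(link)]


def page_urls(template, first, last):
    """Build one URL per page number, much like a shell for loop would."""
    return [template.format(page=n) for n in range(first, last + 1)]


# Hypothetical sample markup with two anchors on a single line.
sample = '<p><a href="/questions/1">Q1</a> <a href="/tags/sed">sed</a></p>'
print(extract_links(sample, r"^/questions/"))
print(page_urls("https://example.com/questions?page={page}", 1, 3))
```

Because the parser works on tags rather than lines, several anchors on one line need no special handling here, which is the problem the first sed solves in the shell version.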
This method is extremely fast, and I use it in Bash functions to format the results across thousands of scraped pages for clients who want their entire site reviewed in one scrape.

If you are running a Linux or Unix system (like FreeBSD or macOS), you can open a terminal session and run this command: `wget -O - | \`

In Python, a small helper such as `getpage(url)` can wrap `urllib.urlopen(url).read()` in a `try`/`except` block and return an empty string on failure (in Python 3 the call is `urllib.request.urlopen`). The start of each link is then located with `page.find`.
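The Python fragment above is cut off, so the following is only a sketch of how such a helper might look in Python 3. The `find_links` function and its default `'<a href="'` marker are assumptions, since the original search string is not shown.

```python
import urllib.request


def getpage(url):
    """Return the page body as text, or '' if the request fails for any reason."""
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.read().decode("utf-8", errors="replace")
    except Exception:
        return ""


def find_links(page, marker='<a href="'):
    """Scan page with str.find and return every quoted URL that follows marker.

    The marker value is an assumption; adjust it to the markup you expect.
    """
    links = []
    position = page.find(marker)
    while position != -1:
        start = position + len(marker)
        end = page.find('"', start)
        if end == -1:
            break  # unterminated attribute, stop scanning
        links.append(page[start:end])
        position = page.find(marker, end)
    return links
```

A call such as `find_links(getpage(url))` chains the two steps. Plain string searching is quick but brittle: it misses anchors written with single quotes or with other attributes before `href`.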

Use this tool to extract URLs in web pages, data files, text and more. What links do we extract? Our service parses the provided website page and discovers all anchor href attributes. We do not check the content of the document referenced by each link.

The first sed finds all href and src attributes and puts each on a new line while simultaneously removing the rest of the line, including the closing double quote (") at the end of the link. Notice I'm using a tilde (~) in sed as the separator for the substitution. This is preferred over a forward slash (/), which can confuse the sed substitution when working with HTML. The awk finds any line that begins with href or src and outputs it.

Once the content is properly formatted, awk or sed can be used to collect any subset of these links. For example, you may not want base64 images, but you do want all the other images. Once the subset is extracted, just remove the href=" or src=" prefix: `sed -r 's~(href="|src=")~~g'`
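For comparison, the same href/src extraction can be sketched in Python with a single regular expression. This is not the sed/awk pipeline itself; `ATTR_PATTERN` and `extract_attr_urls` are illustrative names, and treating any value that starts with `data:` as an inline (base64) resource is an assumption.

```python
import re

# Matches href="..." or src="..." and captures only the quoted value, so the
# attribute prefix and closing quote are dropped in one step. The lookbehind
# avoids matching longer attribute names such as data-src.
ATTR_PATTERN = re.compile(r'(?<![\w-])(?:href|src)="([^"]*)"')


def extract_attr_urls(html, skip_data_uris=True):
    """Return href/src values from html, optionally dropping data: URIs."""
    urls = ATTR_PATTERN.findall(html)
    if skip_data_uris:
        urls = [u for u in urls if not u.startswith("data:")]
    return urls


# Hypothetical markup: one link, one inline base64 image, one regular image.
sample = (
    '<a href="/a"><img src="data:image/png;base64,AAAA">'
    '<img src="/logo.png"></a>'
)
print(extract_attr_urls(sample))
```

Like the sed version, this only handles double-quoted attribute values.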

I scrape websites using Bash exclusively to verify the HTTP status of client links and report back to them on any errors found. We can also extract all the external links or URLs from a webpage using web scraping, one of the most powerful techniques available in Python.

1) Click ‘Configuration > Custom > Extraction’. This menu can be found in the top-level menu of the SEO Spider. It opens the custom extraction configuration, which allows you to configure up to 100 separate ‘extractors’.
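To illustrate how external links might be told apart from internal ones in Python, here is a small sketch using only the standard library. The `split_links` name and the `example.com` URLs are hypothetical, and comparing host names exactly (so subdomains count as external) is a simplifying assumption.

```python
from urllib.parse import urljoin, urlparse


def split_links(page_url, links):
    """Resolve links against page_url and split them into internal and external."""
    page_host = urlparse(page_url).netloc.lower()
    internal, external = [], []
    for link in links:
        absolute = urljoin(page_url, link)
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            continue  # skip mailto:, javascript:, and similar schemes
        if parsed.netloc.lower() == page_host:
            internal.append(absolute)
        else:
            external.append(absolute)
    return internal, external


# Hypothetical page URL and extracted links.
internal, external = split_links(
    "https://example.com/blog/",
    ["/about", "post.html", "https://other.org/x", "mailto:a@b.c"],
)
print(len(internal), "internal,", len(external), "external")
```

Resolving each link with `urljoin` first means relative paths are classified by the host they actually point to.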
